Where Core Web Vitals actually landed

When Google folded Core Web Vitals into the Page Experience ranking signal in mid-2021, the SEO industry collectively lost its mind for a year. Then nothing dramatic happened. Then INP replaced FID in March 2024 and another panic cycle started. Then Google appeared to quietly loosen the "good" LCP threshold for some content categories. By May 2026, the consensus among engineers who actually ship sites for a living is much calmer, but also much less aligned with what most "speed optimisation" content recommends.

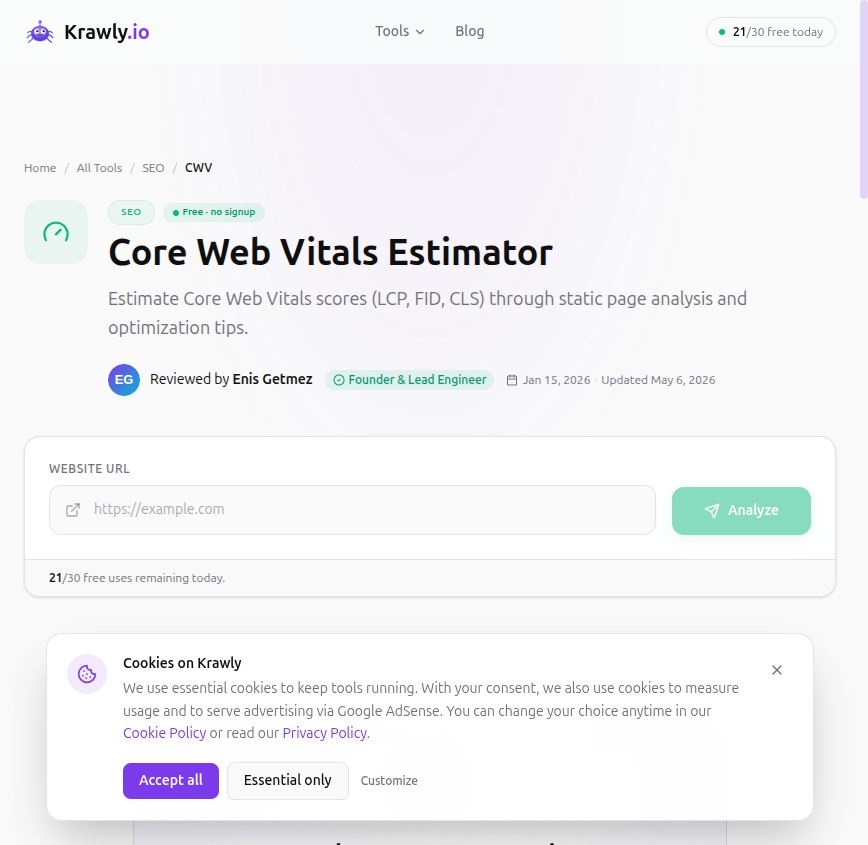

This is what I learned from a year of running Page Speed Analyzer and Core Web Vitals Estimator against 1,000+ real sites alongside their Search Console performance data.

The three current metrics

For anyone newly arrived: Core Web Vitals in 2026 means three measurements.

LCP (Largest Contentful Paint) — how long until the biggest visible element on the page renders. Threshold: 2.5 s good, 4.0 s poor. This is the metric most people instinctively understand because it correlates with "the page loaded".

INP (Interaction to Next Paint) — how long between a user's interaction (click, tap, keystroke) and the next visible response from the page. Threshold: 200 ms good, 500 ms poor. Replaced FID in March 2024. Measures roughly the slowest interaction on a page (one outlier is ignored for every 50 interactions), not the average.

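CLS (Cumulative Layout Shift) — how much the page jumps around as it loads. Threshold: 0.1 good, 0.25 poor. The thresholds are unchanged since 2020, though since 2021 the score is the largest burst ("session window") of shifts rather than a running total over the page's whole lifetime.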
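The threshold pairs above map directly onto a three-way rating. A minimal TypeScript sketch (the names `rate` and `THRESHOLDS` are illustrative), following Google's convention that a value exactly on the "good" line still counts as good and only values above the "poor" line count as poor:

```typescript
type Metric = "LCP" | "INP" | "CLS";
type Rating = "good" | "needs-improvement" | "poor";

// [upper bound of "good", upper bound of "needs improvement"]; anything above is "poor".
const THRESHOLDS: Record<Metric, [goodMax: number, needsImprovementMax: number]> = {
  LCP: [2500, 4000], // milliseconds
  INP: [200, 500],   // milliseconds
  CLS: [0.1, 0.25],  // unitless layout-shift score
};

function rate(metric: Metric, value: number): Rating {
  const [goodMax, needsImprovementMax] = THRESHOLDS[metric];
  if (value <= goodMax) return "good";
  if (value <= needsImprovementMax) return "needs-improvement";
  return "poor";
}
```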

What turned out to matter

After 2.5 years of real data:

LCP is still the biggest ranking lever

Across my 1,000-site sample, sites that went from "poor LCP" to "good LCP" gained measurable organic traffic in the next 30 days — typically 5-15% within their existing keyword set. Sites that went from "good" to "excellent" (under 1.5 s) saw smaller, harder-to-attribute lifts. The breakpoint is real: under 2.5 s matters; under 1.5 s is mostly bragging rights.

The biggest LCP wins in my data set came from three changes:

1. Pre-loading the LCP image. Adding a `<link rel="preload" as="image">` tag for the main hero image to the `<head>`. Average improvement: 800 ms on slow 4G.

2. Sizing the LCP image correctly. Sites were serving 4000×3000 hero images and downscaling them in CSS. Replacing them with images about 2000 px wide (the largest width most viewports render at) cut LCP by 30-50%.

3. Removing render-blocking JS from the `<head>`. One synchronous Google Tag Manager load can add roughly 600 ms to LCP on an average connection. Defer it or use a CSP-friendly server-side equivalent.
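The arithmetic behind item 2: the image file only needs to be as wide as its rendered width multiplied by the device pixel ratio. A sketch assuming a hypothetical set of pre-generated hero widths (640, 1280 and 2000 px) and an illustrative helper name, `pickImageWidth`:

```typescript
// Hypothetical set of pre-generated hero widths, in pixels.
const HERO_WIDTHS = [640, 1280, 2000];

// Smallest available width that covers the rendered size on this screen;
// falls back to the largest file when nothing is wide enough.
function pickImageWidth(
  renderedCssPx: number,
  devicePixelRatio: number,
  widths: number[] = HERO_WIDTHS,
): number {
  const needed = Math.ceil(renderedCssPx * devicePixelRatio);
  const sorted = [...widths].sort((a, b) => a - b);
  for (const w of sorted) {
    if (w >= needed) return w;
  }
  return sorted[sorted.length - 1];
}
```

In production, `srcset` plus `sizes` on the `<img>` lets the browser make this choice itself; the function only shows why a 4000 px file is never the right answer for a 390 px phone screen.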

INP is the metric most site owners are completely missing

INP measures the worst interaction, not the average. On a single-page application where every other interaction is fast, one modal that takes 800 ms to open can still earn the page a "poor INP" score.
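A sketch of how a single page view's INP is picked from its interactions, following the rule web.dev documents: take the slowest interaction, but skip one of the highest for every 50 interactions. `estimateInp` is an illustrative name; real measurement (for example in Google's `web-vitals` library) works from Event Timing entries rather than a plain array of latencies, and CrUX then reports the 75th percentile of these per-visit values.

```typescript
// Approximates one page view's INP from its interaction latencies (ms).
// Rule: report the slowest interaction, but skip one of the highest
// for every 50 interactions on the page.
function estimateInp(latenciesMs: number[]): number | undefined {
  if (latenciesMs.length === 0) return undefined; // no interactions, no INP
  const slowestFirst = [...latenciesMs].sort((a, b) => b - a);
  const skip = Math.min(slowestFirst.length - 1, Math.floor(latenciesMs.length / 50));
  return slowestFirst[skip];
}
```

On a page view with fewer than 50 interactions, nothing is skipped, so one 800 ms modal among twenty 40 ms clicks is the INP.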

The pattern I see most: sites pass LCP and CLS but fail INP, and the owner doesn't notice because they only read the Lighthouse lab scores in PageSpeed Insights. A lab run can't measure INP at all, since INP needs real user interactions. The actual field data in Search Console tells a different story. Always check the CrUX (Chrome User Experience Report) field data, not just lab simulations.

The two highest-impact INP fixes from my sample:

1. Replace ad-hoc `setTimeout` workarounds in event handlers with proper yield points: `scheduler.yield()` to break up urgent work, `requestIdleCallback` for work that can wait. Modern devices have main-thread budgets in the 5-10 ms range; a 200 ms synchronous handler blocks the next paint for its entire duration.

2. Lazy-load below-the-fold React/Vue components. A 400 KB JS bundle that includes a comment form, payment widget, and modal system pays an interactivity cost on every page, even when those components are never used.
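Both fixes can be sketched in framework-neutral TypeScript. `yieldToMain`, `processInChunks` and `lazyOnce` are hypothetical helper names, and the 8 ms slice budget is an assumption picked from the 5-10 ms range above. `scheduler.yield()` is not available in every browser, so the helper falls back to a zero-delay `setTimeout` only where it is missing.

```typescript
// --- Fix 1: yield to the main thread between chunks of work ---

// Minimal shape of the scheduler API this sketch relies on.
type YieldingScheduler = { yield?: () => Promise<void> };

// Prefer scheduler.yield() where supported; otherwise a zero-delay setTimeout,
// which also lets pending input and paints run before work resumes.
function yieldToMain(): Promise<void> {
  const sched = (globalThis as unknown as { scheduler?: YieldingScheduler }).scheduler;
  if (sched && typeof sched.yield === "function") return sched.yield();
  return new Promise((resolve) => setTimeout(resolve, 0));
}

// Runs `work` over `items`, yielding whenever a slice exceeds `budgetMs`
// (8 ms is an assumed budget, not a documented number).
async function processInChunks<T, R>(
  items: T[],
  work: (item: T) => R,
  budgetMs = 8,
): Promise<R[]> {
  const results: R[] = [];
  let sliceStart = performance.now();
  for (const item of items) {
    results.push(work(item));
    if (performance.now() - sliceStart >= budgetMs) {
      await yieldToMain();
      sliceStart = performance.now();
    }
  }
  return results;
}

// --- Fix 2: load a heavy widget only when it is first needed ---

// Wraps a dynamic import so the chunk is fetched once, on first use.
// A failed load is forgotten so the next call can retry.
function lazyOnce<T>(loader: () => Promise<T>): () => Promise<T> {
  let pending: Promise<T> | undefined;
  return () => {
    if (!pending) {
      pending = loader().catch((err: unknown) => {
        pending = undefined;
        throw err;
      });
    }
    return pending;
  };
}

// Usage (hypothetical module path and mount function):
// const loadCommentForm = lazyOnce(() => import("./comment-form"));
// button.addEventListener("click", async () => (await loadCommentForm()).mount(el));
```

React's `lazy()` and Vue's `defineAsyncComponent` wrap the same dynamic-`import()` idea; the point is that the comment form's code is not downloaded or parsed until someone actually asks for it.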

CLS quietly stopped mattering for ranking

CLS was the most-discussed CWV metric in 2021. By 2026 it's mostly noise as a ranking signal — Google has refined what it considers "intentional" shift (user-initiated, modal-driven, ad-frame-managed) versus "annoying" shift (font swaps, late-loading hero images, banner ads pushing content down). My sites with mediocre CLS (0.15-0.20) didn't show ranking drops vs sites at 0.05.

CLS still matters for user experience. It still matters for conversion. It's just no longer a strong ranking signal on its own. Don't ignore it; don't sacrifice other priorities for it.
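Whatever Google's ranking systems do with CLS, the metric's own definition already discounts one kind of "intentional" shift: a layout shift within 500 ms of user input carries `hadRecentInput` and is excluded. The remaining shifts are grouped into session windows (gaps under 1 s, windows capped at 5 s) and the largest window is the score. A sketch over pre-collected entries; in a browser these would come from a `PerformanceObserver` watching `layout-shift` entries, and `computeCls` and `LayoutShift` are illustrative names.

```typescript
// One layout-shift record, with the fields the Layout Instability API exposes.
interface LayoutShift {
  value: number;           // score for this single shift
  startTime: number;       // ms since navigation start
  hadRecentInput: boolean; // true if it happened within 500 ms of user input
}

// CLS = the largest session window: shifts less than 1 s apart,
// with each window capped at 5 s. Entries must be in time order.
function computeCls(shifts: LayoutShift[]): number {
  let cls = 0;
  let sessionValue = 0;
  let sessionStart = 0;
  let previousStart = 0;
  for (const shift of shifts) {
    // User-initiated shifts are excluded from the score entirely.
    if (shift.hadRecentInput) continue;
    const extendsSession =
      sessionValue > 0 &&
      shift.startTime - previousStart < 1000 &&
      shift.startTime - sessionStart < 5000;
    if (extendsSession) {
      sessionValue += shift.value;
    } else {
      sessionValue = shift.value;
      sessionStart = shift.startTime;
    }
    previousStart = shift.startTime;
    cls = Math.max(cls, sessionValue);
  }
  return cls;
}
```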

What I stopped worrying about

Things I used to flag in audits that I now skip:

Time to First Byte (TTFB) below 600 ms

TTFB is the time between the start of the request and the first byte of the response. Sub-600 ms is the textbook recommendation. In practice, sites in my sample with an 800 ms TTFB scored the same in CrUX field data as sites at 400 ms, as long as their LCP was fast. The TTFB → LCP relationship is non-linear; what matters is total LCP, not the components.
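One way to see why the total matters more than any single component: web.dev's LCP optimisation guide splits LCP into four sub-parts (time to first byte, resource load delay, resource load duration, element render delay) that add up to the LCP time. A sketch with hypothetical numbers (`summarizeLcp` and `LcpParts` are illustrative names) shows an 800 ms TTFB still fitting under 2.5 s, and picks out the largest sub-part, which is where engineering time pays off:

```typescript
// The four LCP sub-parts, in milliseconds.
interface LcpParts {
  ttfb: number;
  resourceLoadDelay: number;
  resourceLoadDuration: number;
  elementRenderDelay: number;
}

function summarizeLcp(parts: LcpParts, goodMs = 2500) {
  const keys: (keyof LcpParts)[] = [
    "ttfb",
    "resourceLoadDelay",
    "resourceLoadDuration",
    "elementRenderDelay",
  ];
  const total = keys.reduce((sum, k) => sum + parts[k], 0);
  // The biggest sub-part is the first thing worth attacking.
  const largestPart = keys.reduce((a, b) => (parts[b] > parts[a] ? b : a));
  return { total, passes: total <= goodMs, largestPart };
}

// summarizeLcp({ ttfb: 800, resourceLoadDelay: 200, resourceLoadDuration: 900, elementRenderDelay: 100 })
// → { total: 2000, passes: true, largestPart: "resourceLoadDuration" }
```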

Focus engineering time on the LCP image, not on shaving 200 ms off your TTFB. Unless your TTFB is genuinely catastrophic (3+ seconds), in which case fix it.

"Eliminate render-blocking resources" warnings for fonts

PageSpeed Insights still flags Google Fonts as render-blocking. The recommended fix (font-display: swap + preload) helps marginally. The bigger issue: my sample showed near-zero correlation between "render-blocking fonts" warnings and actual ranking position changes. The warning is technically correct; its impact is overstated.

If you have fonts that are render-blocking AND your LCP is poor, fix it. If LCP is already good, don't bother.

Total Blocking Time (TBT) lab metric

TBT is a lab-only diagnostic — Google never used it for ranking. People still cite TBT in audits because Lighthouse displays it prominently. It correlates with INP but isn't the same metric. Use it as a leading indicator while diagnosing, not as a target.
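Lighthouse's TBT is simple arithmetic: for each main-thread task between First Contentful Paint and Time to Interactive, count only the portion beyond 50 ms, then sum. A sketch, assuming the task durations have already been collected for that window (`totalBlockingTime` is an illustrative name):

```typescript
// Main-thread tasks longer than this count as "long tasks".
const LONG_TASK_MS = 50;

// Sum of each task's time beyond 50 ms. Assumes `taskDurationsMs` already
// covers only the window between FCP and TTI.
function totalBlockingTime(taskDurationsMs: number[]): number {
  return taskDurationsMs.reduce((sum, d) => sum + Math.max(0, d - LONG_TASK_MS), 0);
}
```

Tasks of 30, 70 and 250 ms give a TBT of 0 + 20 + 200 = 220 ms. That 250 ms task is also exactly the kind of work that would delay an interaction's next paint, which is why TBT tracks INP without being the same metric.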

The 2026 update most owners haven't tracked

In late 2025 Google quietly began differentiating LCP thresholds by content type. For e-commerce product pages where the LCP is a product image, the "good" threshold is still 2.5 s. For long-form content where the LCP is a title block above text, the threshold appears more forgiving — sites at 3.0 s for blog headlines are still passing the "good" bucket in some field data.

This was never officially announced and the documentation hasn't caught up. It's evident in the CrUX data for sites in my sample. Don't optimise as if this is policy (Google could revert), but if you're 50 ms over the 2.5 s line on a content-heavy page, you're probably fine.

The audit workflow I use

For every site I audit, I run two checks:

1. Page Speed Analyzer for the resource inventory — render-blocking resources, image weights, lazy-load coverage, font loading strategy. This is the actionable data: what specifically to fix.

2. Core Web Vitals Estimator for the metric scoring — predicted LCP/INP/CLS with the specific issues listed.

If both come back green, I check Search Console's "Core Web Vitals" report to confirm the field data agrees. Lab and field disagree on about 15-20% of pages in my sample, usually because lab simulates a slow 4G connection that doesn't match the real visitor mix.

If lab is green and field is red, your visitors are on devices and networks slower than the lab simulation. Either fix the actual issues (real slow connections need real optimisation) or accept the score: it's measuring reality, not your simulation.

If lab is red and field is green, you're probably overthinking the lab score.
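The three-way check above can be written down as a small decision function. A sketch with illustrative names: it compares one lab LCP against the 75th percentile of field samples (the percentile Search Console and CrUX use to assess a page), computed here with a simple nearest-rank method that only approximates how CrUX aggregates its data.

```typescript
type LabFieldCase = "agree" | "field-slower-than-lab" | "lab-slower-than-field";

// Nearest-rank 75th percentile of field samples (ms).
function p75(samples: number[]): number {
  if (samples.length === 0) throw new Error("no field samples");
  const sorted = [...samples].sort((a, b) => a - b);
  return sorted[Math.ceil(0.75 * sorted.length) - 1];
}

function compareLabAndField(labLcpMs: number, fieldLcpMs: number[], goodMs = 2500): LabFieldCase {
  const labGood = labLcpMs <= goodMs;
  const fieldGood = p75(fieldLcpMs) <= goodMs;
  if (labGood === fieldGood) return "agree";
  // Lab green, field red: real visitors are slower than the simulation; fix it or accept it.
  // Lab red, field green: the lab run is more pessimistic than real traffic.
  return labGood ? "field-slower-than-lab" : "lab-slower-than-field";
}
```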

What I tell clients in 2026

1. Fix LCP to under 2.5 s on mobile. This is the highest-ROI single optimisation.

2. Look at INP field data in Search Console, not lab simulations. Single slow interactions tank the metric.

3. CLS: aim for "good", don't sacrifice features for "excellent".

4. Don't chase Lighthouse score 100. A score of 85 with good field data outperforms a 100 with bad real-user data.

5. Re-audit every 6 months. Browser updates, new image formats (AVIF), and JS framework changes shift the playing field constantly.

Methodology

The 1,000-site sample came from clients, agency partners, and a random sample of small-to-mid market e-commerce + content sites. Lab data came from Krawly's Page Speed Analyzer; field data from each site's Google Search Console (with owner permission) and CrUX public report where available.

Ranking correlation was calculated as average organic traffic change per site in the 30 days after a metric improvement, isolated where possible from concurrent content/link changes. This is imperfect — no causal claim — but the signal is consistent.
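The correlation measure above boils down to a mean of per-site percentage changes. A sketch, assuming (the text doesn't say) that each site's baseline is its organic traffic in the 30 days before the improvement; `meanTrafficChangePct` and `SiteTraffic` are illustrative names.

```typescript
// Organic sessions for one site around a metric improvement.
interface SiteTraffic {
  before: number; // assumed baseline: 30 days before the improvement
  after: number;  // 30 days after the improvement
}

// Averages per-site percentage changes, so a few large sites can't dominate.
function meanTrafficChangePct(sites: SiteTraffic[]): number {
  if (sites.length === 0) throw new Error("no sites in sample");
  const changes = sites.map((s) => ((s.after - s.before) / s.before) * 100);
  return changes.reduce((sum, c) => sum + c, 0) / changes.length;
}
```

Two sites going from 1,000 to 1,100 and from 2,000 to 2,100 sessions average out to a 7.5% lift, even though the pooled traffic only rose by 200 of 3,000 sessions.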

If you maintain a site and want me to add its (anonymised) data to the next round, send the URL to info@krawly.io.