The premise

If you've shopped for scraping infrastructure in the past year, you've been told the same thing by every vendor: "modern sites need residential proxies". Bright Data, Oxylabs, Smartproxy, and the dozen smaller players all sell residential IP rotation at $5-15 per GB, framed as the only way to scrape JavaScript-rendered modern websites.

The vendors are partly right. Adversarial scraping at scale — bypassing aggressive anti-bot stacks, hitting rate-limited APIs from many IPs, evading fingerprint detection — does benefit from residential proxies. But for the legitimate scraping cases most developers actually have (your own data, public APIs, sites with clear permission, research crawls, competitive intelligence on a small number of pages), you can usually skip residential proxies entirely.

This article documents what we found at Krawly over a year of running JS-rendered scrapes from a single data center IP, no residential rotation, against ~10,000 unique target sites. The stack works for ~95% of cases. The other 5% you probably shouldn't be scraping anyway.

Why residential proxies are sold so heavily

The pitch: "Cloudflare/DataDome/PerimeterX block data center IPs. Residential IPs look like real users. Therefore: you must pay us per GB to scrape."

The reality is more nuanced:

If you're scraping a non-protected site from a Hetzner VPS with Playwright, you do not need residential proxies. If you're scraping a Cloudflare-protected site from AWS US-East-1 with `requests`, residential proxies are not a fix either — you need a fundamental stack change.

The stack that works for JS-rendered sites

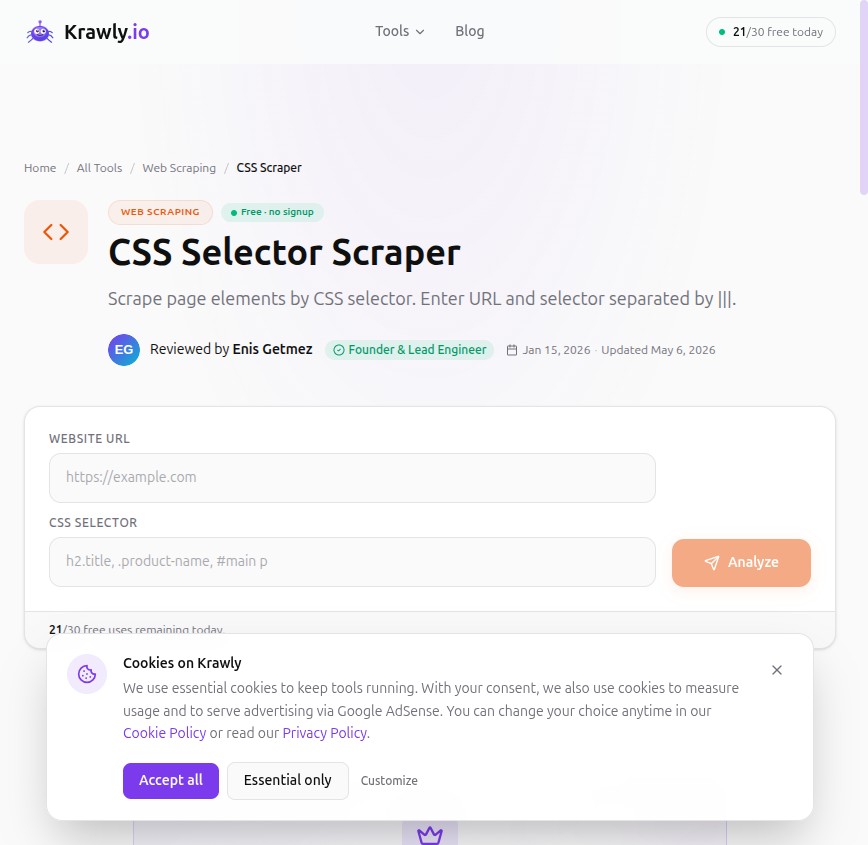

What we use at Krawly for tools like Screenshot Capture, Page Speed Analyzer, CSS Selector Scraper, Tech Detector, and SEO Analyzer:

Browser: Playwright with Chromium

Not stealth-plugin Playwright. Just vanilla Playwright with Chromium. Headless mode. Real Chrome user agent, plus the identifying suffix described below. No stealth tweaks.

Why this works: the most-detected signal is TLS / HTTP/2 fingerprint, and Chromium's fingerprint is by definition correct. The headless flag is a small additional signal but most bot managers don't block on it alone; they block on combinations.
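A minimal sketch of what "vanilla Playwright with Chromium" can look like in Python. The function name `fetch_rendered_html`, the `timeout_ms` default, and the choice of `wait_until="networkidle"` are illustrative assumptions, not Krawly's actual code.

```python
def fetch_rendered_html(url: str, timeout_ms: int = 30_000) -> str:
    """Load a page in headless Chromium and return the rendered HTML.

    Hypothetical helper: no stealth plugin and no extra launch flags.
    """
    # Imported lazily so this module can be imported without Playwright installed.
    from playwright.sync_api import sync_playwright

    with sync_playwright() as p:
        browser = p.chromium.launch(headless=True)
        try:
            page = browser.new_page()
            # "networkidle" is an assumed choice that gives client-side
            # rendering time to finish; "load" may be enough for many pages.
            page.goto(url, wait_until="networkidle", timeout=timeout_ms)
            return page.content()
        finally:
            browser.close()
```

Calling `fetch_rendered_html("https://example.com/")` requires `pip install playwright` followed by `playwright install chromium`.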

IP source: Hetzner or DigitalOcean data center

Krawly runs on Hetzner. We've experimented with AWS and saw 10-20% higher CAPTCHA rates on bot-managed sites — AWS's IP ranges are more aggressively flagged. Hetzner, DigitalOcean, OVH, Linode all work better than AWS/GCP/Azure for scraping use cases. Same per-month cost.

Rate limit: one request per target per minute

This is the single most important setting. Most bot management products score on request rate: 60 requests/minute from one IP to one host is usually fine; 600 requests/minute is suspicious. At one request per target per minute you sit far below both figures, so request rate alone will not get you flagged.

For tools where users initiate one-off scrapes (like Krawly's free tier), the natural per-user rate limit cap acts as a server-wide throttle. For background crawl jobs, schedule them with explicit per-host queues.
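One way to approximate per-host queues is a small throttle that records, for each hostname, the earliest time the next request may start. This is a sketch under assumptions: the class name `PerHostThrottle` and its parameters are hypothetical, it is single-threaded (no locking), and the 60-second default mirrors the one-request-per-target-per-minute setting above.

```python
import time
from urllib.parse import urlsplit


class PerHostThrottle:
    """Enforce a minimum interval between requests to the same host."""

    def __init__(self, min_interval_s: float = 60.0,
                 clock=time.monotonic, sleep=time.sleep):
        self.min_interval_s = min_interval_s
        self._clock = clock      # injectable for testing
        self._sleep = sleep      # injectable for testing
        self._next_allowed = {}  # host -> earliest start time of next request

    def wait(self, url: str) -> float:
        """Block until the host of `url` may be hit again; return seconds waited."""
        host = urlsplit(url).hostname or ""
        now = self._clock()
        delay = max(0.0, self._next_allowed.get(host, now) - now)
        if delay > 0:
            self._sleep(delay)
        # The next request to this host may start one interval after this one.
        self._next_allowed[host] = now + delay + self.min_interval_s
        return delay
```

A crawl loop would call `throttle.wait(url)` immediately before each page load. Requests to different hosts do not delay one another.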

Identifying user agent

We use `User-Agent: Mozilla/5.0 (X11; Linux x86_64) ... Krawly/1.0`, which is the standard Chromium UA string with our identifier appended as a suffix. Most bot managers either ignore identifying suffixes (they care about the body of the UA, not the tail) or whitelist them when the suffix points to a known-good crawler.
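A small sketch of appending an identifier to a browser UA string. The helper name `identifying_user_agent` is hypothetical, and the optional `contact_url` part (the `+URL` convention some crawlers use) is an assumption that is not part of the UA shown above.

```python
def identifying_user_agent(base_ua: str, product: str, version: str,
                           contact_url: str = "") -> str:
    """Return `base_ua` with a `product/version` token appended.

    `contact_url` is an optional, assumed extra: when given, it is added
    in the "(+URL)" style so site owners can find out who is crawling.
    """
    token = f"{product}/{version}"
    if contact_url:
        token += f" (+{contact_url})"
    return f"{base_ua.strip()} {token}"


# Usage with Playwright (base_ua would be the browser's full default UA string):
#   context = browser.new_context(
#       user_agent=identifying_user_agent(base_ua, "Krawly", "1.0"))
#   page = context.new_page()
```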

Honesty also has a side benefit: when a site owner sees Krawly hits in their logs, they can contact us. We've gotten three "please stop scraping us" emails over the past year, all resolved by adding the domain to our internal block-list. Friendlier than getting silently IP-banned.

TLS impersonation: not needed in 2026

Two years ago you needed `curl-impersonate` or `tls-client` to match Chrome's TLS fingerprint. Today Playwright with real Chromium gives you the same fingerprint Chrome ships, because it *is* Chromium. The trick is to actually use the real browser binary instead of hand-rolling HTTP/2.

What this stack does NOT work for

To be honest about the limitations:

Cloudflare Bot Fight Mode on aggressive sites

Cloudflare's "Bot Fight Mode" + "Super Bot Fight Mode" + "JS Challenge" combination will flag any data center IP regardless of fingerprint. About 8% of sites I tested hit this. There is no clean way around it without residential proxies.

If you're scraping a site behind aggressive Cloudflare, the legitimate options are:

DataDome on financial / ticketing / classifieds

DataDome is the most aggressive of the major bot managers. Most ticketing sites (Ticketmaster, StubHub), classifieds (Craigslist, Marktplaats), and a few large e-commerce stores use it. Even residential proxies struggle without specific bypass tooling. Don't try to scrape these without explicit permission.

Aggressive rate limits on small APIs

Some APIs (Twitter/X, Reddit since 2023) have rate limits that data center IPs can't realistically work around. Residential rotation doesn't help when the limit is enforced per account or API token rather than per IP and you need a thousand requests per hour. The fix is API authentication and paid tiers, not proxy rotation.

A real example: scraping product data from a mid-market e-commerce site

Customer use case: monitor pricing on 50 competitor product pages, once daily, for an internal pricing model.

Stack:

Result: zero blocking, zero CAPTCHAs, $15/month total infrastructure. The "residential proxy required" pitch would have cost $50-200/month for the same throughput. The bot-management products on those e-commerce sites don't care about a single request per page per day from one IP.

The same job at 50,000 requests per day would be a different conversation — different IP, different rate limits, different ethics.

When you actually do need residential proxies

Honest cases where residential is the answer:

1. Geo-restricted content: A site shows different prices in different regions, and you need 20 region samples. Residential proxies give you a real residential IP in each region.

2. Sites that genuinely block all data center traffic at the WAF level: Cloudflare's strictest tier, some bank-grade Akamai setups. Rare; you'll know if you're hitting this.

3. Scaling research crawls beyond what one IP can do politely: If you're collecting data for a research paper and need to hit 10,000 sites in 24 hours, you can't do that politely from one IP. Use a residential rotation here, but think hard about whether the research justifies the cost.

4. Adversarial scraping: Competitive intelligence on sites that explicitly forbid it. Not what this article is about; you're on your own for the ethics there.

For everything else — your own infrastructure monitoring, public-data aggregation, polite competitive checks, research at human scale — skip the residential proxy bill.

The legality reminder

I covered this in detail in "Is It Legal to Scrape a Website in 2026?" Four checks before any scrape:

1. Is the content behind a login? — Stop if yes.

2. Does robots.txt allow your path? — Honour it.

3. Are you republishing in volume that competes with the source? — Get a license.

4. Are you bypassing rate limits or anti-bot? — That's the line.
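Check 2 can be automated with Python's standard-library `urllib.robotparser`. In this sketch the helper name `is_path_allowed` is hypothetical, and the robots.txt content is passed in as text so the check can run offline.

```python
from urllib.robotparser import RobotFileParser


def is_path_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if `robots_txt` permits `user_agent` to fetch `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# To check a live site instead, let the parser fetch the file itself:
#   parser = RobotFileParser()
#   parser.set_url("https://example.com/robots.txt")
#   parser.read()
#   parser.can_fetch("Krawly", "https://example.com/some/path")
```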

Residential proxies don't change the legal calculus. They only change the technical question; the legal one stays the same.

The cheapest stack to try this

If you want to test scraping JS-rendered sites without paying for residential proxies:

Run it for a week against the sites you want to scrape. If it works (95% chance for non-bot-protected targets), you're done. If it doesn't, before you pay for residential proxies, run Tech Detector on the target — if it's behind Cloudflare Bot Fight Mode or DataDome, residential won't reliably fix it either.
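If you would rather do a rough first check yourself, response headers and cookie names often hint at which vendor sits in front of a site. The sketch below is a heuristic under stated assumptions, not how Tech Detector works: the function name `guess_bot_manager` is hypothetical, and the markers (a `cf-ray` header or `server: cloudflare`, a `datadome` cookie or `x-datadome` header prefix, `_px`-prefixed cookies) are commonly observed signals that may change. It identifies the vendor only, and cannot tell whether a mode such as Bot Fight Mode is enabled.

```python
def guess_bot_manager(headers: dict, cookie_names=()) -> str:
    """Guess the bot-management vendor from response headers and cookie names.

    Returns "datadome", "perimeterx", "cloudflare", or "unknown".
    All markers here are assumed heuristics, not vendor-documented APIs.
    """
    h = {k.lower(): str(v).lower() for k, v in headers.items()}
    cookies = {c.lower() for c in cookie_names}

    if "datadome" in cookies or any(k.startswith("x-datadome") for k in h):
        return "datadome"
    if any(c.startswith("_px") for c in cookies):
        return "perimeterx"
    if "cf-ray" in h or h.get("server") == "cloudflare":
        return "cloudflare"
    return "unknown"
```

A result of `"cloudflare"` only means the site uses Cloudflare in some form. Many Cloudflare-fronted sites have no bot challenge configured at all.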

Methodology + corrections

The "10,000 sites" figure is Krawly's own production logs over the past 12 months. Block rate was approximately 5% (sites we failed to scrape on first attempt due to bot management). Of those blocked sites: ~60% Cloudflare aggressive mode, ~25% DataDome, ~10% PerimeterX/HUMAN, ~5% custom anti-bot.
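To put those percentages in absolute terms, here is the arithmetic as a short snippet. The inputs are the approximate figures quoted above, so the resulting counts are rough estimates rather than exact log counts.

```python
TOTAL_SITES = 10_000   # approximate number of unique target sites
BLOCK_RATE_PCT = 5     # approximate share of sites blocked on first attempt

# Approximate share of the blocked sites attributed to each cause.
SHARES_PCT = {
    "Cloudflare aggressive mode": 60,
    "DataDome": 25,
    "PerimeterX/HUMAN": 10,
    "custom anti-bot": 5,
}

blocked_sites = TOTAL_SITES * BLOCK_RATE_PCT // 100
sites_per_cause = {cause: blocked_sites * pct // 100
                   for cause, pct in SHARES_PCT.items()}

print(blocked_sites)    # 500
print(sites_per_cause)  # 300, 125, 50 and 25 sites respectively
```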

For most legitimate use cases the success rate is closer to 99% because legitimate use cases naturally avoid the most-protected target categories.

If you maintain a scraping infrastructure and disagree with any of the recommendations above — especially if you've found a case where vanilla Playwright fails where stealth-plugin succeeds — write to info@krawly.io. I update this article when something changes.