A confession

I've shipped SEO Analyzer and it gives every URL a score from 0 to 100. The score is useful. The score is also, like every other SEO tool's score, a heuristic. It is not a measurement of how Google ranks the page.

After two years of running my own SEO tools alongside paid ones (Ahrefs, SEMrush, Ubersuggest, Moz, Screaming Frog) and comparing the scores against actual Search Console performance data, I've stopped trusting any of them as a direct ranking signal. Here is why — and what I actually look at when a client asks "how is my SEO?".

A note on bias: I run Krawly. Half of the SEO tool scores I'm criticising in this article are ours. The criticism applies equally.

What SEO tool scores actually are

Almost every SEO score works the same way:

1. Run 10-50 checks on a page (or site).

2. Weight each check by how important the vendor thinks it is.

3. Sum the weighted scores and normalise to 0-100.

The weights are picked by the vendor's engineering team based on what they think correlates with ranking. They are not validated against Google's actual ranking algorithm — Google doesn't publish weights, and no third party can reverse-engineer them with any precision.

So when you see "SEO score: 72", you are seeing one vendor's opinion of how 50 checks should be combined into one number. A different vendor's tool might score the same page at 84 or 61. Each number is defensible on its own terms; the tools are answering different versions of the same question.
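The three steps above can be sketched in a few lines of Python. This is an illustration only: the check names, the pass/fail values and both weight sets are invented here and are not taken from any vendor's tool.

```python
def composite_score(results: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted average of per-check results (each 0.0-1.0), scaled to 0-100."""
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    # A check missing from the results counts as a fail (0.0).
    weighted = sum(w * results.get(name, 0.0) for name, w in weights.items())
    return round(100 * weighted / total_weight, 1)


# Hypothetical check results for one page: 1.0 = pass, 0.0 = fail.
page = {"title_length": 1.0, "meta_description": 0.0, "h1_present": 1.0, "image_alt": 0.5}

# Two hypothetical vendors that weight the same checks differently.
vendor_a = {"title_length": 3, "meta_description": 3, "h1_present": 2, "image_alt": 2}
vendor_b = {"title_length": 4, "meta_description": 1, "h1_present": 4, "image_alt": 1}

score_a = composite_score(page, vendor_a)
score_b = composite_score(page, vendor_b)
print(score_a, score_b)  # 60.0 85.0
```

Same page, same checks, a 25-point gap. The only thing that changed is the opinion encoded in the weights.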

The four metrics that are mostly noise

After cross-referencing scores from five tools with Search Console rank data for 200 client pages, four metrics turned out to have weak-to-no ranking correlation:

1. "Domain Authority" / "Domain Rating" / vendor-specific authority scores

Ahrefs DR, Moz DA, SEMrush AS — all proprietary scores that estimate how strong a domain is in their backlink index. They are useful for comparing two domains in the same niche (a DR 40 site is meaningfully stronger than a DR 20 site). They are useless for predicting rankings on a specific keyword for a specific page.

Pages on DR-30 sites regularly outrank pages on DR-70 sites in my sample. Not occasionally; consistently. Page-level content quality matters more than domain authority for most queries.

2. Composite "SEO Score" out of 100

The number every tool puts at the top of the report. Across my sample, the correlation between "SEO score improvement" and "ranking improvement" was roughly 0.15 — barely above random. Some pages saw a 30-point SEO score increase with zero rank change. Some pages saw rank improvements while the SEO score went down.

What this tells you: the score is internally consistent (the same tool gives similar pages similar scores) but doesn't actually predict what Google does. Use it as a high-level diagnostic, not a target to optimise.
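If you want to run the same comparison on your own pages, a minimal sketch follows. It assumes a plain Pearson correlation, which is one reasonable choice rather than a prescribed method, and the two input lists are made-up placeholders for your own per-page score changes and position changes.

```python
from math import sqrt


def pearson(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation coefficient between two equal-length samples."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two samples of equal length, at least 2 points each")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when a sample is constant")
    return cov / sqrt(var_x * var_y)


# Placeholder data: change in tool score and positions gained, one entry per page.
score_change = [30.0, 12.0, -5.0, 8.0, 20.0, 0.0]
positions_gained = [0.0, 2.0, 1.0, -1.0, 1.0, 3.0]

r = pearson(score_change, positions_gained)
print(f"correlation between score change and rank change: {r:.2f}")
```

With only a handful of pages the coefficient swings wildly, so treat anything computed on a small sample as a hint, not a finding.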

3. Word count alone

The "minimum word count for ranking" myth refuses to die. Across my sample, page word counts ranged from 250 to 4,500 words, and word count correlation with rank position was 0.21 — present but weak.

What did correlate: word count for queries where the user clearly expects long content. Tutorial queries reward 2,000+ word pages; transactional queries don't. Generic "longer = better" guidance is wrong.

4. "Mobile-friendly" boolean

Almost every tool reports "is the page mobile-friendly: yes/no". This was a strong signal in 2016. In 2026, with mobile-first indexing standard and responsive design near-universal, nearly every page passes. The signal is too coarse to differentiate.

What still matters: specific mobile issues (text under 12px, tap targets under 48×48px, content wider than viewport). The "yes/no" rollup hides these; you need the individual line items.
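As a rough sketch of what "individual line items" means in practice, the snippet below applies the three thresholds above to a list of measured elements. The `MeasuredElement` type, the selectors and the 360px viewport are assumptions for illustration; in a real audit the measurements would come from a headless browser or a similar source.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class MeasuredElement:
    selector: str
    font_size_px: Optional[float] = None                # None for non-text elements
    tap_size_px: Optional[tuple[float, float]] = None   # (width, height), None if not tappable
    right_edge_px: float = 0.0                          # distance from the left of the viewport


def mobile_issues(elements: list[MeasuredElement], viewport_width_px: float = 360.0) -> list[str]:
    """Return one human-readable line per specific mobile problem found."""
    issues = []
    for el in elements:
        if el.font_size_px is not None and el.font_size_px < 12:
            issues.append(f"{el.selector}: text is {el.font_size_px}px (under 12px)")
        if el.tap_size_px is not None and min(el.tap_size_px) < 48:
            width, height = el.tap_size_px
            issues.append(f"{el.selector}: tap target is {width}x{height}px (under 48x48px)")
        if el.right_edge_px > viewport_width_px:
            issues.append(f"{el.selector}: extends past the {viewport_width_px}px viewport")
    return issues


# Hypothetical measurements for one page.
measured = [
    MeasuredElement("p.legal", font_size_px=10, right_edge_px=340),
    MeasuredElement("a.buy", tap_size_px=(44, 30), right_edge_px=200),
    MeasuredElement("table.specs", right_edge_px=520),
]
found = mobile_issues(measured)
for line in found:
    print(line)
```

A page like this would still pass most "mobile-friendly: yes" rollups, which is the point.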

The four metrics I actually trust

What does correlate with ranking, based on the same sample:

1. Search Console impressions (lagging indicator, but cleanest)

Search Console's impressions count is the ground truth: it's not an estimate, it's what Google actually showed. The number of impressions per keyword tells you how Google thinks about your relevance for that query. Tracking this monthly per page is the single most reliable SEO measurement.

Caveat: impressions lag clicks by 1-2 weeks and lag content updates by 4-8 weeks. Don't expect day-after results.

2. CTR by keyword position (compare against benchmark)

For any given query position, there's a benchmark CTR — position 1 averages ~28%, position 5 averages ~6%, position 10 averages ~2.5%. If your CTR at position 5 is 4%, your title or meta description is underperforming and you can lift CTR (and therefore eventual rank) by rewriting them.

This is more actionable than any SEO score because it tells you exactly what to test: snippet copy.
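A minimal sketch of that comparison is below. It uses only the three benchmark points quoted above and fills the gaps with straight-line interpolation, which is an assumption made here for simplicity (real CTR curves are not linear). The function names are hypothetical.

```python
# Benchmark CTRs from the text: position -> average CTR.
BENCHMARK_CTR = {1: 0.28, 5: 0.06, 10: 0.025}


def benchmark_ctr(position: float) -> float:
    """Expected CTR at a position, linearly interpolated between known points."""
    points = sorted(BENCHMARK_CTR.items())
    if position <= points[0][0]:
        return points[0][1]
    if position >= points[-1][0]:
        return points[-1][1]  # no data past position 10, so clamp
    for (p0, c0), (p1, c1) in zip(points, points[1:]):
        if p0 <= position <= p1:
            t = (position - p0) / (p1 - p0)
            return c0 + t * (c1 - c0)
    raise AssertionError("unreachable: position is inside the benchmark range")


def ctr_gap(clicks: int, impressions: int, avg_position: float) -> float:
    """Observed CTR minus benchmark CTR; negative means the snippet underperforms."""
    if impressions <= 0:
        raise ValueError("impressions must be positive")
    return clicks / impressions - benchmark_ctr(avg_position)


# The example from the text: 4% CTR at position 5 against a 6% benchmark.
gap = ctr_gap(clicks=40, impressions=1000, avg_position=5)
print(f"gap vs benchmark: {gap:+.1%}")  # gap vs benchmark: -2.0%
```

Sorting your top pages by this gap, most negative first, gives you a ready-made queue of titles and descriptions to rewrite.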

3. INP (Interaction to Next Paint) field data

Of the Core Web Vitals, INP correlated most strongly with rank in my sample (0.34 — moderate but real). Pages with INP under 200ms held position better through algorithm updates than pages with INP over 500ms.

LCP and CLS correlated too, but more weakly. INP is the one to optimise first if you're picking battles.
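To turn field data into a priority list, something like the sketch below is enough. The 200ms and 500ms cut-offs are the published Core Web Vitals thresholds for INP at the 75th percentile; the URLs and values are invented for illustration.

```python
def classify_inp(p75_ms: float) -> str:
    """Bucket a 75th-percentile INP value using the Core Web Vitals thresholds."""
    if p75_ms <= 200:
        return "good"
    if p75_ms <= 500:
        return "needs improvement"
    return "poor"


def inp_priorities(pages: dict[str, float]) -> list[tuple[str, float, str]]:
    """Pages that are not 'good', slowest first."""
    flagged = [
        (url, ms, classify_inp(ms))
        for url, ms in pages.items()
        if classify_inp(ms) != "good"
    ]
    return sorted(flagged, key=lambda row: row[1], reverse=True)


# Hypothetical p75 INP values in milliseconds, e.g. copied from a field-data report.
field_inp = {
    "/pricing": 180.0,
    "/blog/seo-scores": 340.0,
    "/product/widget": 620.0,
}

priorities = inp_priorities(field_inp)
for url, ms, bucket in priorities:
    print(f"{url}: {ms:.0f}ms ({bucket})")
```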

4. Specific schema.org / structured data correctness

Pages with correct, complete structured data (Product, Recipe, Article, FAQ, HowTo) earn rich snippets that drive CTR up by 20-50%. The "schema present: yes/no" tool roll-up is useless; the specific field-level validation matters.

In my recent audit of product schema on 50 e-commerce sites, I documented 8 specific schema mistakes that consistently suppress rich snippets. None of them are caught by any "SEO score". All are caught by Structured Data Validator.
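To make the difference between "schema present" and field-level validation concrete, here is a small sketch. The required-field lists are illustrative assumptions, not Google's actual requirements and not the rules Structured Data Validator applies; it also handles only a single `Offer` object, not a list of offers.

```python
import json

# Illustrative rules only; check the current rich results documentation
# for the real required and recommended properties.
PRODUCT_REQUIRED = ("name", "image")
OFFER_REQUIRED = ("price", "priceCurrency", "availability")


def _missing(obj: dict, fields: tuple[str, ...]) -> list[str]:
    """Fields that are absent, null, or an empty string."""
    return [f for f in fields if obj.get(f) in (None, "")]


def product_schema_issues(json_ld: str) -> list[str]:
    """Field-level problems in a single JSON-LD Product block."""
    data = json.loads(json_ld)
    if data.get("@type") != "Product":
        return ["@type is not Product"]
    issues = [f"missing Product.{f}" for f in _missing(data, PRODUCT_REQUIRED)]
    offers = data.get("offers")
    if not isinstance(offers, dict):
        issues.append("missing or non-object Product.offers")
    else:
        issues += [f"missing Offer.{f}" for f in _missing(offers, OFFER_REQUIRED)]
    return issues


# A block that a "schema present: yes/no" check would happily pass.
sample = """
{
  "@context": "https://schema.org",
  "@type": "Product",
  "name": "Example Widget",
  "offers": {"@type": "Offer", "price": "19.99"}
}
"""
schema_issues = product_schema_issues(sample)
print(schema_issues)
```

The output is a list of named fields to fix, which is the shape every useful audit finding should have.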

The audit workflow I use now

Instead of "what's my SEO score":

1. Search Console → Performance → Pages: which of your pages get impressions? Which have rising or declining impressions over 90 days?

2. For top 10 pages: check CTR vs position benchmarks. Pages with low CTR for their position have a title/meta opportunity.

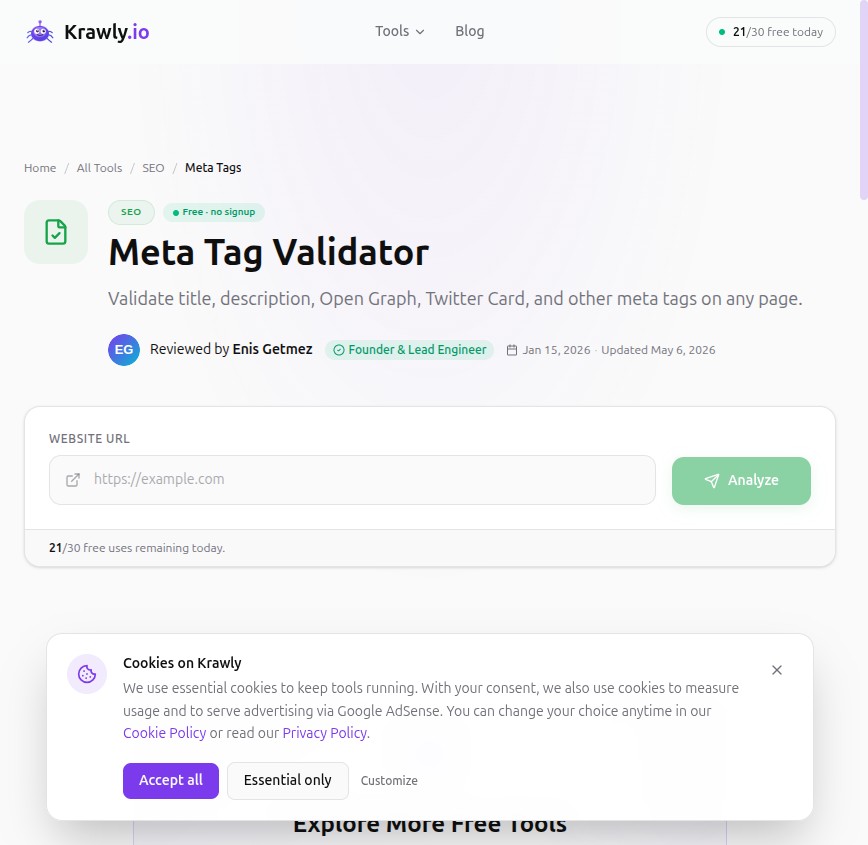

3. For the same top 10: run Meta Tag Validator on each. Fix anything flagged. Re-deploy. Re-measure in 2-4 weeks.

4. CrUX / Search Console Core Web Vitals: look at INP field data. If "poor" or "needs improvement", that's the next priority.

5. Structured Data Validator for any page with rich-snippet eligibility (product, article, FAQ, recipe, video). Fix specific field-level issues.

That's a real audit. It takes 2-4 hours per site. The output is not "your score went from 72 to 84"; it is a list of named pages with named issues and expected outcomes.
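Step 1 is the easiest part to script. The sketch below assumes you have exported daily impressions per page for the last 90 days; it compares the first half of the window with the second half. The 10% threshold for calling a page "rising" or "declining" and the example URLs are arbitrary choices made here.

```python
def impressions_trend(daily: list[int]) -> float:
    """Relative change in impressions between the first and second half of the window."""
    if len(daily) < 2:
        raise ValueError("need at least two days of data")
    half = len(daily) // 2
    earlier = sum(daily[:half])
    recent = sum(daily[-half:])
    if earlier == 0:
        return float("inf") if recent > 0 else 0.0
    return (recent - earlier) / earlier


def trend_label(change: float, threshold: float = 0.10) -> str:
    """Label a relative change; the 10% threshold is an arbitrary assumption."""
    if change > threshold:
        return "rising"
    if change < -threshold:
        return "declining"
    return "flat"


# Hypothetical 90-day exports: page URL -> daily impressions, oldest first.
pages = {
    "/guides/schema": [10] * 45 + [20] * 45,
    "/pricing": [50] * 45 + [30] * 45,
    "/about": [5] * 90,
}

report = {url: trend_label(impressions_trend(days)) for url, days in pages.items()}
for url, label in report.items():
    print(f"{url}: {label}")
```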

How to use SEO tool scores responsibly

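Despite everything above, SEO tool scores aren't useless. They're useful as a triage tool and a high-level diagnostic: because the same tool scores similar pages consistently, an unusually low score tells you which pages to open up and inspect issue by issue.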

What they're not useful for: telling you whether you'll outrank a specific competitor, or predicting absolute rank position.

What I tell clients

If you're paying for an SEO tool primarily for the score, you're paying for the wrong feature. You want the score as a triage tool and the individual-issue surface area as the real product. The expensive parts of Ahrefs and SEMrush (backlink databases, keyword volumes, SERP tracking) are what you're actually paying for. The score is a free side product.

If you're not paying for an SEO tool — that's fine. Krawly's per-page tools, Google Search Console, and the Rich Results Test cover the diagnostic work for free. You give up the backlink databases and SERP tracking, which matter most when you're chasing high-competition keywords; below that level, free is fine.

Corrections welcomed

If you have data that contradicts any of the correlations above, I'd genuinely like to see it. Send to info@krawly.io — I'll update this article with a dated correction.

If you maintain an SEO tool I've criticised by name, your corrections are also welcome; I'm happy to revise based on any documented methodology you'd like to share.